WASHINGTON, DC, April 23, 2024 – Oil spills are deadly disasters for ocean ecosystems. Their effects on fish and marine mammals can last for decades, and they wreak havoc on coastal forests, coral reefs, and the surrounding land. Chemical dispersants are commonly used to break down oil, but they often increase toxicity in the process.

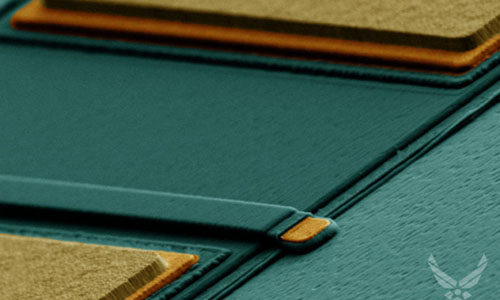

In Applied Physics Letters, published by AIP Publishing, researchers from Central South University, Huazhong University of Science and Technology, and Ben-Gurion University of the Negev used laser treatments to transform ordinary cork into a powerful tool for treating oil spills.

They wanted to create a nontoxic, effective oil cleanup solution from materials with a low carbon footprint, but their decision to try cork stemmed from a surprising discovery.

“In a different laser experiment, we accidentally found that the wettability of the cork processed using a laser changed significantly, gaining superhydrophobic (water-repelling) and superoleophilic (oil-attracting) properties,” author Yuchun He said. “After appropriately adjusting the processing parameters, the surface of the cork became very dark, which made us realize that it might be an excellent material for photothermal conversion.”

“Combining these results with the eco-friendly, recyclable advantages of cork, we thought of using it for marine oil spill cleanup,” author Kai Yin said. “To our knowledge, no one else has tried using cork for cleaning up marine oil spills.”

“Oil recovery is a complex and systematic task, and participating in oil recovery throughout its entire life cycle is our goal.”

—Yuchun He